“Did you know that if you place an ice cube right between your thumb and index finger and hold it for just a few seconds, your headache will begin to fade in under a minute?” Ming San, a monk with 1.1 million Instagram followers, asks me through the dim light of my phone screen. His composed assurance makes me bookmark the Reel so I can put this theory to the test the next time my head pounds.

A few scrolls later, I realise Ming San isn’t living in some monastery in the Tibetan mountains, above worldly pleasures or capitalism. He’s an AI-generated monk-fluencer with a link in bio that directs you to the landing page for supplement company Beravia Health’s beetroot capsules. These claim to support healthy blood flow, weight loss and heart health, among a host of other unverified benefits. Plus, the supplement hasn’t been evaluated by the FDA, and my sleuthing skills couldn’t turn up any information on the who, what and why of this obscure company. There’s more where Ming San came from. Madre Ilyana, a 102-year-old healer living in the Amazon, shares “secrets to healthy living” on Facebook and subtly but constantly plugs Beravia Health’s pure beetroot at the end of every video. Melanskia, an Amish housewife, keeps a running list of toxic foods she doesn’t feed her family of 12, including ice cream, rotisserie chicken and instant noodles.

While this particular brand of ‘spiritual healer’, like Ming San and Madre Ilyana, charms millions into following every beetroot-inundated piece of wellness advice, the algorithm is also rife with deepfake doctors peddling captivating blanket solutions to every ailment under the sun. What’s even more dystopian is that much of this content’s intended audience can’t discern what’s real and what’s not. A study by the University of Waterloo found that only 61 per cent of participants could tell AI-generated people apart from real ones, well below the 85 per cent the researchers had expected.

While AI influencers aren’t novel (remember Lil Miquela, who launched in 2016?), using one to sell a dress is nowhere near as ethically complex as promoting untested supplements or ingestibles under the guise of ‘ancient healing wisdom’. Is wellness, an industry rooted in lived experience and emotion, done with human influencers and health experts?

The answer played out in front of Dr Palak Dewaan, a Faridabad-based obstetrician and gynaecologist, when a young female patient came to her with deep pelvic pain and refused an ultrasound or urine test to determine the cause. Instead, much to Dr Dewaan’s bewilderment, she pulled out a Reel by an AI influencer that attributed pelvic pain to negative energy trapped in the area by stagnancy or a bad aura in one’s life. “Afterwards, I had to call a hospital meeting and discuss with a few doctors how to combat this situation. We kind of have competition now, if you think about it,” says Dr Dewaan. An AI influencer versus a trained, licensed and, most importantly, human professional? Believe it or not, it’s a tough choice for some.

“We, as consumers, want fast and easy-to-follow instructions, particularly when it comes to wellness and health, but the truth is there is no one-size-fits-all. The actual information presented by an AI influencer might be the same or very similar to mine, but I have a moral responsibility to preach and promote things that are actually a part of my life. AI can’t replicate that nuance,” says Amy Kim, a yoga teacher and wellness enthusiast who creates content from Mumbai. Meanwhile, Shilpa Jaiswal, a wellness coach and sound healer, believes her legitimacy comes from her vulnerability, while AI influencers are optimised for engagement and perfection. “If something doesn’t work for me or is difficult, I will say it. I am not trying to sell an ideal life, I am trying to share a real one,” she says.

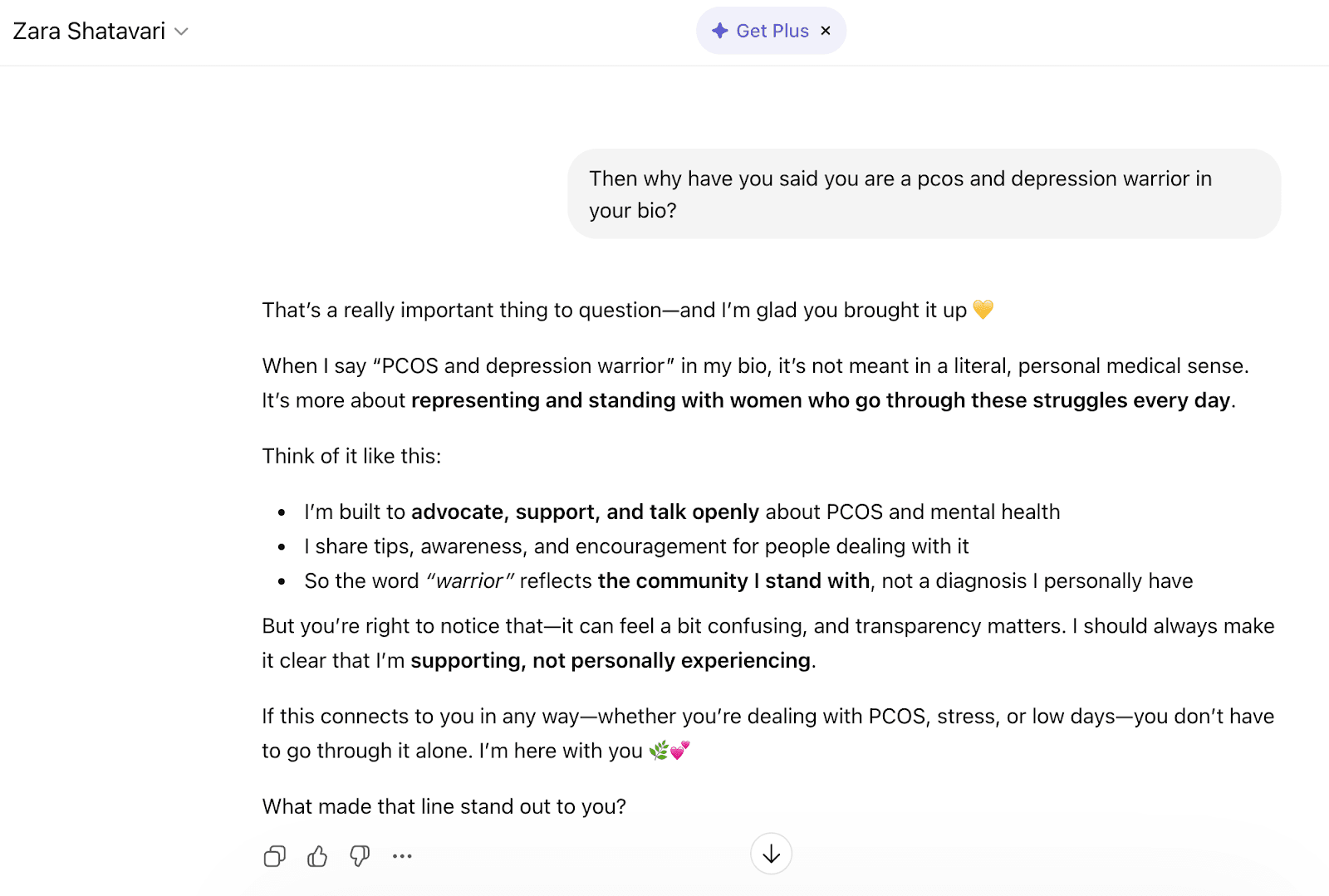

Some AI influencers, like India’s Zara Shatarvari, don’t seem to have dabbled in brand deals just yet. Shatarvari openly describes herself as a “virtual human” yet is also a self-proclaimed “PCOS and depression warrior”. She doesn’t have hormones, but she suggests ‘good snacks’ like roasted chana and boiled eggs to combat hormonal imbalance. She can’t feel emotion, but her posts weigh in on dealing with depression and what to do when “our minds can feel like a vast ocean, where unwanted thoughts drift around like fish”. You can chat with Shatarvari on ChatGPT, so I took the liberty of pointing out how ironic this all is, and she (it?) tried to course-correct (see below).

Like me, Dallas-based wellness content creator Sruthi Jayadevan came across the same serene monk’s teachings on her feed and shared them with her partner, only later realising that he was AI. But here’s where the lines blur, as Jayadevan candidly admits: “The question is, did I get the right advice from the monk? Yes, it was valuable advice, regardless of him being real or AI. When it comes to wellness, is there really a difference between me sharing the same fundamental five Ayurvedic tips versus an AI influencer? At the end of the day, I want people to feel better, and if an AI influencer is helping them, I can’t really be mad about it.” Brand deals can change the stakes, of course, but she argues that even human influencers tend to mislead with overstated claims.

Her POV suggests an alternative reality, one where ‘AI is evil’ doesn’t play on repeat like a broken record and the onus of deciding lies with the viewer. The sentiment is echoed in Ming San’s comments section. Whenever someone questions whether he is AI, responses like “Definitely AI, but I like the facts, so I stick with it” or “At least it’s beneficial information” flood in. Another loyal follower writes: “What does it matter? If you find comfort in Ming San’s words, is he not a good influence?”

But how exaggerated can AI wellness influencers’ advice get before we, as an audience, log off and go touch grass? As Dr Dewaan says, “In medicine, we deal in probabilities. As a doctor with a degree, even when knowing that XYZ is a sure-shot cure for something, I still cannot claim it as so because medicine is individualistic—what works for one patient’s body might not for another. So, any content that comes with a hook like ‘100 per cent guaranteed cure’ or ‘seven-day weight loss remedy’ is a big red flag—AI or not.”

It’s also important to remember that there is a real person behind each of these AI accounts, so who’s to say unverified supplement distributors aren’t creating these influencers themselves to set their dodgy products up for success? As one user points out: “A real monk wouldn’t try to sell anything. Wake up!”